Something fundamental has shifted in P&C insurance. Artificial intelligence (AI) is no longer a tool that helps humans decide; it is making the decisions itself. Claims are approved or denied. Premiums are set. Fraud is flagged. And that will only increase as more insurers adopt AI. According to NAIC survey data, 88% of auto insurers and 70% of home insurers already report current or planned AI usage.

But insurers’ governance infrastructure has not kept up with how quickly AI has evolved. Despite the NAIC’s December 2023 Model Bulletin recommending regular bias and discrimination testing, many insurers still do not meet these guidelines. That gap is not a compliance footnote but an enterprise liability. A single ungoverned AI decision can cascade into bad-faith litigation, regulatory sanctions, rate-filing violations, and multi-state class actions, with potential exposure ranging from $5 million to $100 million per incident.

Carriers without an AI program do not face the greatest risk. That title goes to carriers with AI programs that believe their existing controls are sufficient while they run live pricing and underwriting models without generative AI (GenAI) controls, agentic governance, or decision-level audit trails.

Traditional AI Guardrails for P&C Insurance Are Not Enough

Most P&C carriers built their AI guardrails and governance programs around traditional machine learning, such as gradient boosting models, regression analysis, and anomaly detection. The controls built around them, such as SHAP explainability, annual disparate impact testing, confidence thresholds, and drift monitoring, were designed for a specific kind of AI that behaves predictably and can be interrogated after the fact.

GenAI and agentic systems operate differently. Rather than producing the same output for the same input, GenAI models generate probabilistic responses that can vary, hallucinate facts, and produce plausible-sounding but incorrect conclusions. An agentic system can autonomously chain together multiple decisions and actions without a human in the loop at each step. The failure modes are categorically different, and the governance frameworks built for traditional machine learning do not address them.

The regulatory environment is catching up faster than most carriers’ governance programs are. By late 2025, 24 states had adopted the NAIC’s AI Model Bulletin, with more expected to follow. Colorado, New York, Texas, and California have each enacted state-specific requirements with material financial penalties for violations. The EU AI Act, which classifies insurance pricing and underwriting as high-risk AI, will be fully enforceable in August 2026, a deadline that matters for any carrier with reinsurance relationships or global operations.

For example, a leading insurer recently faced a Texas Attorney General lawsuit for using third-party behavioral data as a proxy for protected class characteristics. The exposure did not begin when the model ran; it began at the data sourcing layer, before a single decision was made. Guardrail gaps at any layer become legal exposure at every layer. This is precisely why governance cannot be treated as a model-level concern alone. Instead, it must account for how different AI types introduce different risk profiles at every stage of the decision chain, from data sourcing through deployment.

Addressing that challenge requires governance across four distinct layers:

- Legal: Anchors every model to state regulatory requirements, including unfair claims settlement practices statutes, anti-discrimination provisions, and rate filing rules. This is where proxy discrimination controls live, where third-party data relationships are vetted, and where the written AI Systems Program required by the NAIC is maintained.

- Model: Governs how the AI itself behaves, monitoring its fairness, transparency, and confidence. Controls are matched to the AI type: SHAP explainability and disparate impact testing for traditional machine learning, hallucination detection and personally identifiable information (PII) masking for GenAI, and tool-call audit logs and bounded decision authority for agentic systems.

- Operational: Ensures humans remain in the loop at the right decision points. Consequential insurance decisions must be explainable, attributable to a named decision maker, and supported by documented evidence — requirements that AI-only processing cannot satisfy without human review checkpoints.

- Audit: Guarantees that every decision can be replayed, attributed, and explained before the examiner asks, through time-stamped, immutable records of every AI-driven decision.

No layer is optional. A carrier with strong model controls but no audit trail will still fail a Department of Insurance (DOI) market conduct examination.

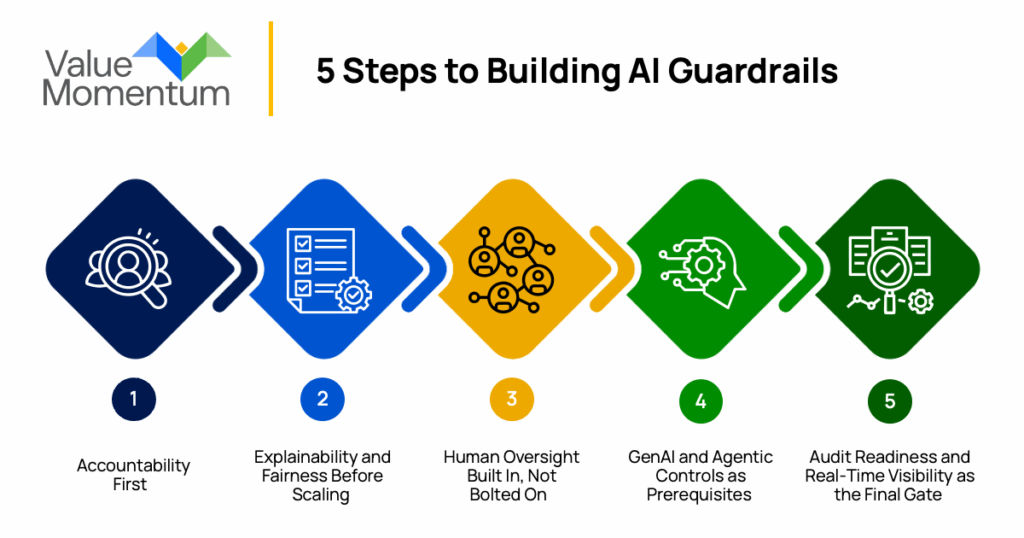

From Gates to Action: Building AI Guardrails That Hold

While the four-layer architecture defines what governance looks like, the governance gates define when a model is ready to operate within that architecture. The sequence in which those gates are cleared determines whether an insurer's guardrails actually reduce its compliance liability.

1. Accountability First

Every model must have a named owner and formal approval from an AI Governance Council before deployment. This means establishing an AI model inventory and a Governance Council with real approval authority before any model goes into production.

Without this foundation, every model in production is an unassigned liability. And when something goes wrong, the absence of clear accountability compounds the exposure.

2. Explainability and Fairness Before Scaling

There must be documented evidence that model outputs can be interrogated and understood by a regulator or plaintiff attorney. Disparate impact testing must confirm the model does not produce discriminatory outcomes across protected classes, with results documented and available to regulators upon request.

These are the controls regulators will look for first, and carriers that cannot demonstrate them will face adverse findings regardless of how sophisticated their models are. The Texas case is a reminder that fairness exposure can originate before the model even runs. Testing must extend to the inputs a model relies on, not just the outputs it produces.
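
As a rough illustration of what a documented disparate impact check might look like, the sketch below compares favorable-outcome rates across groups and flags any group whose rate falls below a chosen fraction of the best-performing group's rate. The 0.8 threshold is an assumption borrowed from the "four-fifths" rule of thumb used in employment-selection practice; nothing in the NAIC bulletin is being quoted here, and the function names and sample data are hypothetical. The same comparison could be run on model inputs, such as a third-party behavioral score, to look for proxy effects before the model ever runs.

```python
from collections import defaultdict


def adverse_impact_ratios(decisions, reference_group=None):
    """Return each group's favorable-outcome rate divided by the reference rate.

    `decisions` is an iterable of (group, approved) pairs. If no reference
    group is given, the group with the highest approval rate is used.
    """
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1

    rates = {g: approvals[g] / totals[g] for g in totals}
    if reference_group is None:
        reference_group = max(rates, key=rates.get)

    ref_rate = rates[reference_group]
    if ref_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return {g: rate / ref_rate for g, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """List groups whose ratio falls below the (assumed) threshold."""
    return sorted(g for g, r in ratios.items() if r < threshold)


# Hypothetical sample: group A approved 80 of 100, group B approved 60 of 100.
sample = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 60 + [("B", False)] * 40
)
ratios = adverse_impact_ratios(sample)
flagged = flag_groups(ratios)
print(ratios, flagged)
```

A real program would add statistical significance testing and store each run's inputs, results, and date so they can be produced on request; this sketch shows only the core ratio.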

3. Human Oversight Built In, Not Bolted On

There must be documented checkpoints at which human reviewers can intervene before consequential decisions take effect. The thresholds that trigger review must themselves be auditable because regulators will ask not only whether humans were involved, but also how, when, and under what conditions.
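
One way to make a review checkpoint auditable is to have the routing step return not just the route but also the rule and policy version that produced it. The sketch below assumes a simple policy with a minimum confidence and a set of decision types that always go to a person; the class names, field names, and the 0.90 value are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewPolicy:
    policy_version: str        # which version of the written policy applied
    min_confidence: float      # below this, a human must review
    always_review: frozenset   # decision types that never go straight through


@dataclass(frozen=True)
class RoutingResult:
    route: str                 # "auto" or "human_review"
    reason: str                # human-readable condition that fired
    policy_version: str
    min_confidence: float


def route_decision(decision_type, confidence, policy):
    """Decide whether a model output needs human review, and record why."""
    if decision_type in policy.always_review:
        route = "human_review"
        reason = f"decision type '{decision_type}' always requires review"
    elif confidence < policy.min_confidence:
        route = "human_review"
        reason = f"confidence {confidence:.2f} below {policy.min_confidence:.2f}"
    else:
        route = "auto"
        reason = "confidence at or above threshold"
    return RoutingResult(route, reason, policy.policy_version, policy.min_confidence)


# Hypothetical policy: denials always reviewed, everything else at 0.90.
policy = ReviewPolicy("2025-01", 0.90, frozenset({"claim_denial"}))
print(route_decision("claim_denial", 0.99, policy))
print(route_decision("claim_approval", 0.85, policy))
print(route_decision("claim_approval", 0.95, policy))
```

Because the result carries the policy version and threshold, a later reviewer can see which conditions applied at the time of the decision rather than the conditions in force today.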

4. GenAI and Agentic Controls as Prerequisites

Hallucination detection, PII masking, tool-call audit logs, and human approval gates for consequential actions must be in place before any generative or agentic deployment touches an externally visible workflow. Carriers that deploy these systems before foundational controls are operational consistently find themselves with ungoverned models in production and no audit trail to produce when the examiner arrives.
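
To make one of these controls concrete, here is a minimal sketch of PII masking applied to text before it reaches a generative model. It assumes US-style Social Security numbers, simple email addresses, and ten-digit phone numbers written with dashes or dots; the patterns and the `mask_pii` name are illustrative only. A production control would likely rely on a dedicated PII detection service rather than a handful of regular expressions.

```python
import re

# Illustrative patterns only; real-world PII takes many more forms.
PII_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def mask_pii(text):
    """Replace matched PII with a label and return (masked_text, counts).

    The counts can be written to an audit log without storing the PII itself.
    """
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        counts[label] = n
    return text, counts


note = "Claimant SSN 123-45-6789, email john.doe@example.com, phone 555-123-4567."
masked, counts = mask_pii(note)
print(masked)
print(counts)
```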

5. Audit Readiness and Real-Time Visibility as the Final Gate

Every AI-driven decision must be backed by a complete, replayable record that can be produced on demand, including input data, model version, confidence score, output, and the identity of any human reviewer. Building toward real-time monitoring and board-level visibility, with continuous drift detection and live bias monitoring, is what separates carriers that govern AI as it actually operates from those that govern it only on paper.
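
The record described above can be sketched as an append-only log in which each entry stores the hash of the previous one, so that any later change to an earlier entry becomes detectable. Hash chaining is an assumption on my part about how "immutable" might be approximated in application code; the article does not prescribe a mechanism, and many carriers would use write-once storage or a database feature instead. The `AuditLog` class and its field names are hypothetical, and `input_data` is assumed to be JSON-serializable.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(payload):
    """SHA-256 over a canonical JSON encoding of the record body."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self):
        self._records = []

    def append(self, *, input_data, model_version, confidence, output,
               reviewer_id=None):
        """Add a time-stamped record linked to the previous record's hash."""
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "input_data": input_data,
            "model_version": model_version,
            "confidence": confidence,
            "output": output,
            "reviewer_id": reviewer_id,
            "prev_hash": self._records[-1]["hash"] if self._records else None,
        }
        record["hash"] = _digest(record)  # hash computed before the key is added
        self._records.append(record)
        return record

    def verify(self):
        """Return True if no record has been altered or reordered."""
        prev_hash = None
        for record in self._records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if record["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True


log = AuditLog()
log.append(input_data={"claim_id": "C-1", "amount": 1200},
           model_version="fraud-v3", confidence=0.97, output="approve")
log.append(input_data={"claim_id": "C-2", "amount": 9800},
           model_version="fraud-v3", confidence=0.62, output="deny",
           reviewer_id="adjuster-17")
print(log.verify())
```

Changing any field of an earlier record after the fact causes `verify()` to return False, which is the property an examiner-facing audit trail needs; the chain does not by itself prevent deletion of the whole log, so storage-level protections would still be required.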

Carriers that build through this sequence move faster, automate more broadly, and earn regulatory trust before the examiner arrives.

Guardrails as a Path, Not an Obstacle

AI guardrails in P&C insurance are an operational requirement in this era of innovation. The regulatory environment is moving, the litigation risk is real, and the carriers that treat governance as an afterthought are accumulating exposure with every model deployed.

This framework — four layers of control architecture, five governance gates, and a deliberate implementation sequence — allows carriers to deploy AI confidently, pass DOI market conduct examinations, and scale automation without catastrophic legal or regulatory exposure. Guardrails are not the cost of deploying AI: They are the infrastructure that makes deployment possible.

For carriers ready to build a governance program that holds up under regulatory scrutiny, ValueMomentum’s Insurer’s Generative AI Handbook provides a practical guide to deploying AI responsibly across the P&C insurance value chain.